“Fancy a card game, Johnny?”

“Sure, Jennie, deal me in. Wot’re we playin’?”

“Gin rummy sound good?”

Great idea, and it fits right in with our current Entanglement theme. The aspect of Entanglement that so bothered Einstein, “spooky action at a distance,” can be just as spooky close-up. Check out this magic example — go ahead, it’s a fun trick to figure out.

Spooky, hey? And it all has to do with cards being two-dimensional. I know, as objects they’ve got three dimensions same as anyone (four, if you count time), but functionally they have only two dimensions — rank and suit.

When you’re looking at a gin rummy hand you need to consider each dimension separately. The queens in this hand form a set — three cards of the same rank. So do the three nines. In the suit dimension, the 4-5-6-7 run is a sequence of ranks all in the same suit.

A physicist might say that evaluating a gin rummy hand is a separable problem, because you can consider each dimension on its own. <Hmm … three queens, that’s a set, and three nines, another set. The rest are hearts. Hey, the hearts are in sequence, woo-hoo!>

“Gin!”

If you chart the hand, the run and sets and their separated dimensions show up clearly even if you don’t know cards.
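For readers who'd rather see the separability in code than in a chart, here's a minimal sketch. It checks the rank dimension and the suit dimension independently, then asks whether the melds cover the whole hand. The card encoding, the function names `find_sets` and `find_runs`, and the suits given to the queens and nines are assumptions made for illustration; the hand described above only fixes the ranks and the four hearts. It also skips the trickier case where one card could serve in either a set or a run.

```python
from collections import defaultdict

RANKS = "A23456789TJQK"  # ace low, T stands for ten

# Hypothetical hand: three queens, three nines, and the 4-5-6-7 of hearts.
# Suits of the queens and nines are assumed; the text does not name them.
hand = ["QS", "QD", "QC", "9S", "9D", "9C", "4H", "5H", "6H", "7H"]

def find_sets(cards):
    """Rank dimension only: groups of three or more cards sharing a rank."""
    by_rank = defaultdict(list)
    for card in cards:
        by_rank[card[0]].append(card)
    return [group for group in by_rank.values() if len(group) >= 3]

def find_runs(cards):
    """Suit dimension only: three or more consecutive ranks within one suit."""
    by_suit = defaultdict(list)
    for card in cards:
        by_suit[card[1]].append(RANKS.index(card[0]))
    runs = []
    for suit, indexes in by_suit.items():
        indexes.sort()
        current = [indexes[0]]
        for i in indexes[1:]:
            if i == current[-1] + 1:
                current.append(i)       # still consecutive, extend the run
            else:
                if len(current) >= 3:
                    runs.append([RANKS[j] + suit for j in current])
                current = [i]           # gap found, start a new candidate run
        if len(current) >= 3:
            runs.append([RANKS[j] + suit for j in current])
    return runs

sets = find_sets(hand)
runs = find_runs(hand)

# Gin (in this simplified sense) means every card sits in some set or run.
melded = {card for group in sets + runs for card in group}
is_gin = melded == set(hand)
print(sets, runs, is_gin)
```

Neither function ever looks at the other dimension, which is the whole point of calling the problem separable.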

A standard strategy for working a complex physics problem is to look for a way to split one kind of motion out from everything else that’s going on. If the whole shebang is moving in the z-direction, you can address the z-positions, z-velocities and z-forces as an isolated sub-problem and treat the x and y stuff separately. Then, if everything is rotating in the xy plane, you may be able to separate the angular motion from the in-and-out (radial) motion.
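A small sketch of that splitting strategy, using a thrown ball as a stand-in example (the ball, the uniform gravity along z, the starting velocities and the function names are all assumptions for illustration, not anything from a particular physics problem). The z sub-problem and the xy sub-problem are solved by separate functions that never consult each other.

```python
G = 9.8  # m/s^2, assumed uniform gravity acting along z only

def z_motion(z0, vz0, t):
    """Isolated z sub-problem: position under constant acceleration."""
    return z0 + vz0 * t - 0.5 * G * t**2

def xy_motion(x0, y0, vx, vy, t):
    """Isolated xy sub-problem: no forces, so straight-line motion."""
    return x0 + vx * t, y0 + vy * t

def position(t):
    """Glue the independent answers back together."""
    x, y = xy_motion(0.0, 0.0, 3.0, 4.0, t)   # assumed starting values
    z = z_motion(0.0, 9.8, t)                 # assumed starting values
    return x, y, z

print(position(1.0))
print(position(2.0))
```

Changing the z launch speed leaves the x and y answers untouched, which is what makes the split legitimate here.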

But sometimes things don’t break out so readily. One nasty example would be several massive stars flying toward each other at odd angles as they all dive into a black hole. Each of the stars is moving in the black hole’s weirdly twisted space, but it’s also tugged at by every other star. An astrophysicist would call the problem non-separable and probably try simulating it in a computer instead of setting up a series of ugly calculus problems.

The card trick video uses a little sleight-of-eye to fake a non-separable situation. Here’s the chart, with green dots for the original set of cards and purple dots for the final hand after “I’ve removed the card you thought of.” The kings are different, and so are the queens and jacks. As you see, the reason the trick works is that the performer removed all the cards from the original hand.

The goal of the illusion is to confuse you by muddling ranks with suits. What had been a king of diamonds in the first position became a king of spades, whereas the other king became a queen. You were left with an entangled perception of each card’s two dimensions.

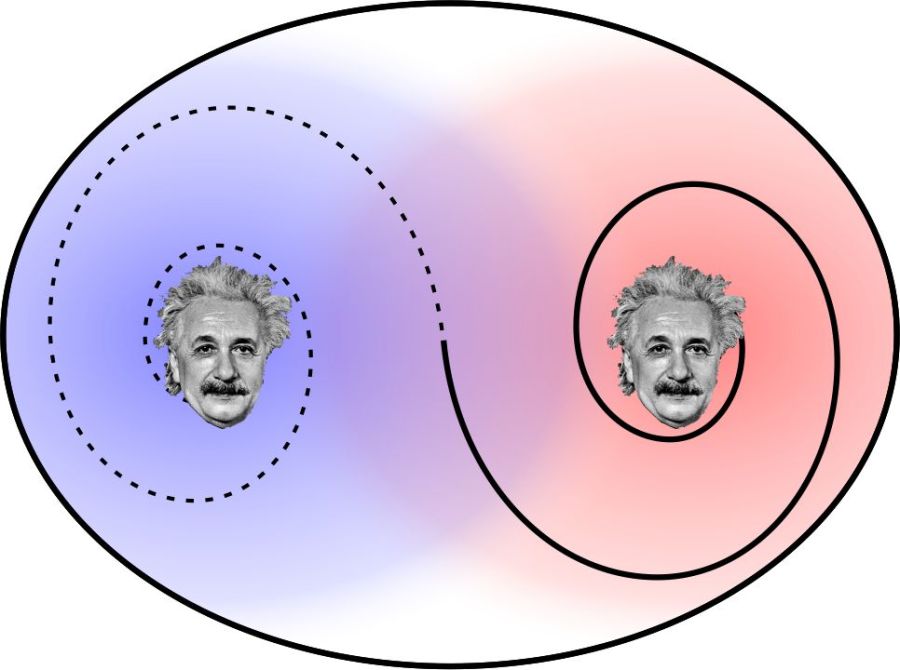

In quantum mechanics that kind of entanglement crops up any time you’ve got two particles with a common history. It’s built into the math — the two particles evolve together and the model gives you no way to tell which is which.

Suppose for instance that an electron pair has zero net spin (spin direction is a dimension in QM like suit is a dimension in cards). If the electron going to the left is spinning clockwise, the other one must be spinning counterclockwise. Or the clockwise one may be the one going to the right — we just can’t tell from the math which is which until we test one of them. The single test settles the matter for both.
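Here's a toy bit of bookkeeping for that electron pair, offered as a sketch rather than a real quantum simulation. The class name `SpinPair` and its `test` method are made up for illustration. Nothing is assigned to either electron until the first test, and that single test fixes the answer for both. With only one test direction in play, this toy can't distinguish the quantum picture from a pre-assigned "which is which."

```python
import random

class SpinPair:
    """Toy model of a zero-net-spin pair (illustrative assumption only)."""

    def __init__(self, rng=None):
        self.rng = rng or random.Random()
        self.left = None    # undetermined until the first test
        self.right = None

    def test(self, side):
        """Test the electron on 'left' or 'right'; the first test settles both."""
        if self.left is None:
            first = self.rng.choice(["clockwise", "counterclockwise"])
            other = "counterclockwise" if first == "clockwise" else "clockwise"
            if side == "left":
                self.left, self.right = first, other
            else:
                self.right, self.left = first, other
        return self.left if side == "left" else self.right

pair = SpinPair()
print(pair.test("left"), pair.test("right"))  # always opposite directions
```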

Einstein didn’t like that ambiguity. His intuition told him that QM’s statistics only summarize deeper happenings. Bohr opposed that idea, holding that QM tells us all we can know about a system and that it’s nonsense to even speak of properties that cannot be measured. Einstein called the deeper phenomena “elements of reality” though they’re currently referred to as “hidden variables.” Bohr won the battle but maybe not the war — Einstein had such good intuition.

~~ Rich Olcott

Information transfer at infinite speed? Of course not, because neither hungry person knows what’s in either box until they open one or until they exchange information. Even Skype operates at light-speed (or slower).


Suppose you’re playing goalie in an inverse tennis game. There’s a player in each service box. Your job is to run the net line using your rackets to prevent either player from getting a ball into the opposing half-court. Basically, you want the ball’s locations to look like the single-node yellow shape up above. You’ll have to work hard to do that.


For practice using Heisenberg’s Area, what can we say about the atom? (If you’re checking my math it’ll help to know that the Area, h/4π, can also be expressed as 0.5×10⁻³⁴ kg m²/s; the mass of one hydrogen atom is 1.7×10⁻²⁷ kg; and the speed of light is 3×10⁸ m/s.) On average the atom’s position is at the cube’s center. Its position range is one meter wide. Whatever the atom’s average momentum might be, our measurements would be somewhere within a momentum range of (h/4π kg m²/s) / (1 m) = 0.5×10⁻³⁴ kg m/s. A moving particle’s momentum is its mass times its velocity, so the velocity range is (0.5×10⁻³⁴ kg m/s) / (1.7×10⁻²⁷ kg) = 0.3×10⁻⁷ m/s.
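If you'd like to check that arithmetic by machine, here's a short sketch. The variable names are mine, and the value used for Planck's constant (6.626×10⁻³⁴ kg m²/s) is the standard one rather than a number quoted above; the hydrogen mass and the one-meter range come straight from the paragraph.

```python
import math

H = 6.626e-34            # Planck's constant, kg m^2/s (standard value)
M_HYDROGEN = 1.7e-27     # kg, mass of one hydrogen atom as quoted in the text
POSITION_RANGE = 1.0     # m, width of the position range

area = H / (4 * math.pi)                       # Heisenberg's Area, h/4π
momentum_range = area / POSITION_RANGE         # kg m/s
velocity_range = momentum_range / M_HYDROGEN   # m/s

print(f"Area:           {area:.2e} kg m^2/s")
print(f"Momentum range: {momentum_range:.2e} kg m/s")
print(f"Velocity range: {velocity_range:.2e} m/s")
```

Carrying the unrounded Area through gives a velocity range a shade above the hand-rounded figure, but both round to 0.3×10⁻⁷ m/s.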

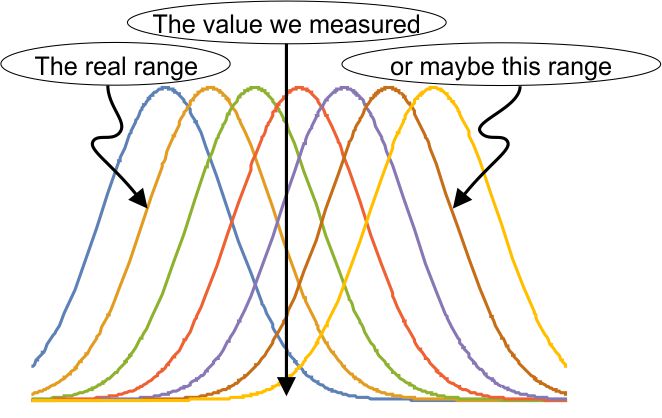

elaborate mathematical structure. If the measurement is a quantum mechanical result, part of that structure is our familiar bell-shaped curve. It’s an explicit recognition that way down in the world of the very small, we can’t know what’s really going on. Most calculations have to be statistical, predicting an average and an expected range about that average. That prediction may or may not pan out, depending on what the experimentalists find.


So there could be a collection of bell-curves gathered about the experimental result. Remember those