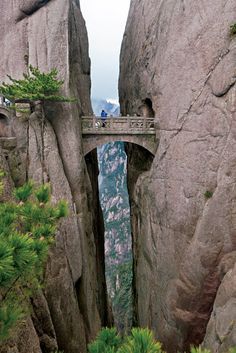

See that little guy on the bridge, suspended halfway between all the way down and all the way up? That’s us on the cosmic size scale.

I suspect there’s a lesson there on how to think about electrons and quantum mechanics.

Let’s start at the big end. The physicists tell us that light travels at 300,000 km/s, and the astronomers tell us that the Universe is about 13.7 billion years old. Allowing for leap years, the oldest photons must have taken about 4.3×10¹⁷ seconds to reach us, during which time they must have covered 1.3×10²⁶ meters. Double that to get the diameter of the visible Universe, 2.6×10²⁶ meters. The Universe probably is even bigger than that, but far as I can see that’s as far as we can see.
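
If you’d like to check that arithmetic yourself, here’s a minimal Python sketch. The variable names are mine, and I’m assuming “allowing for leap years” means a 365.25-day year.

```python
# Rough check of the light-travel arithmetic, using the rounded inputs from the text.
SPEED_OF_LIGHT_M_PER_S = 3.0e8            # 300,000 km/s, expressed in meters per second
AGE_OF_UNIVERSE_YEARS = 13.7e9            # about 13.7 billion years
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # assumption: average year length with leap years

age_seconds = AGE_OF_UNIVERSE_YEARS * SECONDS_PER_YEAR
radius_m = SPEED_OF_LIGHT_M_PER_S * age_seconds
diameter_m = 2 * radius_m

print(f"travel time: {age_seconds:.1e} s")
print(f"distance:    {radius_m:.1e} m")
print(f"diameter:    {diameter_m:.1e} m")
```

The three printed values should round to the figures quoted above.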

At the small end there’s the Planck length, which takes a little explaining. Back in 1899, Max Planck published his epochal paper showing that light happens piecewise (we now call them photons). In that paper, he combined several “universal constants” to derive a convenient (for him) universal unit of length: 1.6×10⁻³⁵ meters. It’s certainly an inconvenient number for day-to-day measurements (“Gracious, Junior, how you’ve grown! You’re now 8×10³⁴ Planck-lengths tall.”). However, theoretical physicists have saved barrels of ink and hours of keyboarding by using Planck-lengths and other such “natural units” in their work instead of explicitly writing down all the constants.

Furthermore, there are theoretical reasons to believe that the smallest possible events in the Universe occur at the scale of Planck lengths. For instance, some theories suggest that it’s impossible to measure the distance between two points that are closer than a Planck-length apart. In a sense, then, the resolution limit of the Universe, the ultimate pixel size, is a Planck length.

So that’s the size range of the Universe, from 1.6×10⁻³⁵ up to 2.6×10²⁶ meters. What’s a reasonable way to fix a half-way mark between them?

It makes no sense to just add the two numbers together and divide by two the way we’d do for an arithmetic average. That’d be like adding together the dime I owe my grandson and the US national debt — I could owe him 10¢ or $10, but either number just disappears into the trillions.

The best way is to take the geometrical average: multiply the two numbers and take the square root. I did that. It’s the X in the sizeline, at 6.5×10⁻⁵ meters, or about the diameter of a fairly large bacterium. (In the diagram, VSC is the Vega Super Cluster, AG is the Andromeda Galaxy, and the numbers are those exponents of 10.)
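
Here’s a short Python sketch of the two kinds of average, using the rounded endpoints from the text (the constant names are mine). It also shows why the geometric average is the natural half-way mark: it’s the same as averaging the exponents of 10.

```python
import math

PLANCK_LENGTH_M = 1.6e-35     # small end of the range, from the text
VISIBLE_UNIVERSE_M = 2.6e26   # big end of the range, from the text

# Arithmetic average: the small number simply vanishes into the big one.
arithmetic_mean = (PLANCK_LENGTH_M + VISIBLE_UNIVERSE_M) / 2

# Geometric average: multiply the two numbers and take the square root.
geometric_mean = math.sqrt(PLANCK_LENGTH_M * VISIBLE_UNIVERSE_M)

# Same thing seen on the sizeline: the midpoint of the two base-10 exponents.
log_midpoint = (math.log10(PLANCK_LENGTH_M) + math.log10(VISIBLE_UNIVERSE_M)) / 2

# How many powers of ten separate the two ends.
orders_of_magnitude = math.log10(VISIBLE_UNIVERSE_M / PLANCK_LENGTH_M)

print(f"arithmetic mean: {arithmetic_mean:.1e} m")
print(f"geometric mean:  {geometric_mean:.1e} m")
print(f"10**midpoint:    {10 ** log_midpoint:.1e} m")
print(f"orders of magnitude: {orders_of_magnitude:.1f}")
```

The arithmetic average comes out at half the Universe’s diameter, which tells us nothing about the small end. The geometric one lands at the X.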

That’s worth marveling at. Sixty orders of magnitude between the size of the Universe and the size of the ultimate pixel. Yet from blue whales to bacteria, Earth’s life just happens to occupy the half-dozen orders right in the middle of the range. We think that’s it.

Could this be another case of the geocentric fallacy? Humans were so certain that Earth was the center of the Universe, before Brahe and Galileo and Newton proved otherwise. Is there life out there at scales much larger or much smaller than we imagine?

Who knows? But here’s an intriguing physics/quantum angle I’d like to promote. We know a lot about structures bigger than us — solar systems and binary stars and galaxy clusters on up. We know a few sizes and structures a bit smaller — viruses and molecules and atoms. We’re aware of quarks and gluons that reside inside protons and atomic nuclei, but we don’t know their size or structure.

Even a proton is huge on the Planck-length scale. At 1.8×10⁻¹⁵ meters the proton measures some 10²⁰ Planck-lengths. There’s as much scale-space between the Planck-length and the proton as there is between the Earth (1.3×10⁷ meters) and the Universe.
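
A quick sketch of that comparison, again with the rounded sizes from the text and names of my own choosing. “Scale-space” here is just the number of powers of ten between two sizes.

```python
import math

PLANCK_LENGTH_M = 1.6e-35
PROTON_M = 1.8e-15
EARTH_M = 1.3e7
VISIBLE_UNIVERSE_M = 2.6e26

# The proton's size counted in Planck-lengths.
proton_in_planck_lengths = PROTON_M / PLANCK_LENGTH_M

# Powers of ten from the Planck-length up to the proton...
orders_planck_to_proton = math.log10(PROTON_M / PLANCK_LENGTH_M)

# ...and from the Earth up to the visible Universe.
orders_earth_to_universe = math.log10(VISIBLE_UNIVERSE_M / EARTH_M)

print(f"proton = {proton_in_planck_lengths:.1e} Planck-lengths")
print(f"Planck-length to proton: {orders_planck_to_proton:.1f} orders of magnitude")
print(f"Earth to Universe:       {orders_earth_to_universe:.1f} orders of magnitude")
```

With these inputs the two spans come out within about one order of magnitude of each other, roughly twenty each.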

It’s hard to believe that Terra infravita’s area has no structure whereas Terra supravita is so … busy. The Standard Model’s “ultimate particles,” the electrons and photons and neutrinos and quarks and gluons, all operate down there somewhere. It’s reasonable to suppose that they reflect a deeper architecture somewhere on the way down to the Planck-length foam.

Newton wrote (in Latin), “I do not make hypotheses.” But golly, it’s tempting.

~~ Rich Olcott

Keep going until the outermost hexagon has 32 dots along each edge. All the hexagons together will have exactly 2016 dots.
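
The count can be checked with a few lines of Python. This is a sketch under one assumption the caption doesn’t spell out: that the hexagons are nested so they all share one corner, the classic “hexagonal number” picture. In that layout the hexagon with k dots per edge contributes 4k−3 dots that weren’t already there. The function name is mine.

```python
def hexagonal_dots(edge_dots: int) -> int:
    """Total dots in nested hexagons sharing one corner (assumed layout)."""
    total = 0
    for k in range(1, edge_dots + 1):
        # The hexagon with k dots per edge has 6*(k-1) dots, but 2*k-3 of them
        # lie on the two shared edges and were already counted; k = 1 is the
        # single corner dot.
        total += 4 * k - 3
    return total


def hexagonal_closed_form(edge_dots: int) -> int:
    """Closed form for the same sum: n * (2n - 1)."""
    return edge_dots * (2 * edge_dots - 1)


print(hexagonal_dots(32), hexagonal_closed_form(32))
```

Both routes give 2016 for 32 dots per edge.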

Newton was essentially a geometer. These illustrations (from Book 1 of the Principia) will give you an idea of his style. He’d set himself a problem then solve it by constructing sometimes elaborate diagrams by which he could prove that certain components were equal or in strict proportion. For instance, in the first diagram (Proposition II, Theorem II), we see an initial glimpse of his technique of successive approximation. He defines a sequence of triangles which as they proliferate get closer and closer to the curve he wants to characterize.

The third diagram is particularly relevant to the point I’ll finally get to when I get around to it. In Prop XLIV, Theorem XIV he demonstrates something weird. Suppose two objects A and B are orbiting around attractive center C, but B is moving twice as fast as A. If C exerts an additional force on B that is inversely dependent on the cube of the B-C distance, then A’s orbit will be a perfect circle (yawn) but B’s will be an ellipse that rotates around C, even though no external force pushes it laterally.

It all started with Newton’s mechanics, his study of how objects affect the motion of other objects. His vocabulary list included words like force, momentum, velocity, acceleration, mass, …, all concepts that seem familiar to us but which Newton either originated or fundamentally re-defined. As time went on, other thinkers added more terms like power, energy and action.

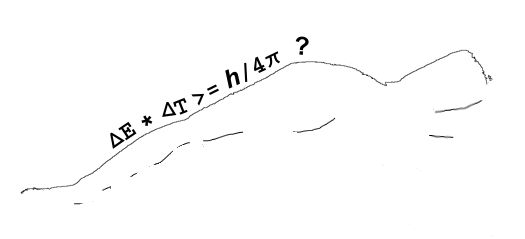

There is another way to get the same dimension expression, but things aren’t as nice there as they look at first glance. Action is given by the amount of energy expended in a given time interval, times the length of that interval. If you take the product of energy and time the dimensions work out as (ML²/T²)×T = ML²/T, just like Heisenberg’s Area.
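
The dimension bookkeeping can be sketched in Python by tracking the exponents of M, L and T and adding them when quantities are multiplied. The `Dimension` class is a hypothetical helper of mine. I’m also assuming that Heisenberg’s Area means momentum times distance, which this passage doesn’t state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    """Exponents of mass (M), length (L) and time (T) for a physical quantity."""
    mass: int = 0
    length: int = 0
    time: int = 0

    def __mul__(self, other: "Dimension") -> "Dimension":
        # Multiplying two quantities adds their dimension exponents.
        return Dimension(
            self.mass + other.mass,
            self.length + other.length,
            self.time + other.time,
        )


ENERGY = Dimension(mass=1, length=2, time=-2)    # ML²/T²
TIME = Dimension(time=1)                         # T
MOMENTUM = Dimension(mass=1, length=1, time=-1)  # ML/T
LENGTH = Dimension(length=1)                     # L

action = ENERGY * TIME                     # energy times time
momentum_times_length = MOMENTUM * LENGTH  # assumed meaning of "Heisenberg's Area"

print(action)
print(action == momentum_times_length)
```

Both products carry the exponents M¹L²T⁻¹, so the comparison prints `True`.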