Al was pouring my mugful of his morning blend (“If it doesn’t wake you up we’ll call the doctor”) when Jeremy stepped up to the counter. “Hi, Mr Moire. I’m still trying to get my head around that virtual particle thing. Hi, Al, a large decaf, please, double sugar, three creamers. It looks like the shorter amount of time you give a particle to happen, the bigger it can get, but that doesn’t make sense because I’d think the longer you wait the more likely it’s gonna happen. Thanks, Al.”

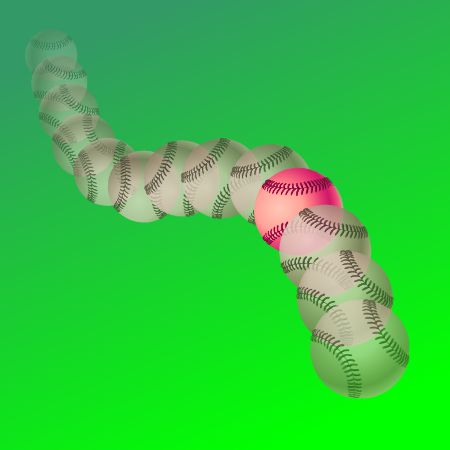

“Take a breath to blow on that coffee, Jeremy, or you’ll burn your tongue. Hmm… Word is your batting average is running about 250 these days. That right?”

“Yessir. I didn’t know you’re keeping track.”

“Keeping my ears open is part of my job. So you’re hitting about once every four at-bats. That gives Coach an estimate of when you’ll get your next hit. What’s your slugging average?”

“What’s a slugging average?”

“Your total number of batted-on bases, divided by your at-bats, times a thousand ’cause sports writers don’t do decimal points. You get one count in the numerator for a single, two for a double and so on.”

“Lemme think. If I’m doing 250 overall and about half are singles and the other half are doubles that’d give me an SA of … about 375.”

“Pretty good. So does that number tell Coach anything about when to expect another double?”

“Mmm, no, but what does that have to do with my virtual particle question?”

“In each case you’ve got a pair of statistics that tell you some things and hide other things. Batting averages and your wait-time notion are about when to expect an event of some sort to occur. You could hit another single or you could tag a homer — all Coach knows is that you should be able to get on base about once every four at-bats.”

“What about the other statistics?”

“They’re the flip side, sort of. You could think of the SA as batting potential. If you hit homers all the time your SA would be 4000. If you whiff every pitch your SA would be zero. Anything between those extremes tells Coach something about your productivity but nothing about when you’re going to produce. Energy uncertainty works the same way for virtual particles. If you’re doing long-duration energy evaluations you can be pretty sure that any single measurement will be close to the long-term average. You might possibly see a significant deviation from that average but only if you check just the right brief interval.”

“And for the particles in that empty space?”

“If you’re looking long-term, no particles. That’s what ‘empty’ means. When there’s definitely nothing in a volume of space it makes sense to say its energy is zero because particles have mass and therefore embody energy. But a particle might show up and go away after a very brief interval without significantly affecting that long-term average. Quantum theory doesn’t say it will show up, just that it might.”

“So does it?”

“Oh yes, in space, in the lab and in commerce. One explanation for your cell phone’s NFC function hinges on virtual radio-frequency photons being exchanged between devices.”

“Wait. If a virtual particle shows up in that empty space, then it’s not empty any more and its energy isn’t zero any more, is it?”

“You’ve just discovered one aspect of zero-point energy, the quantum prediction that every system, even empty space, contains a non-zero minimum amount of energy. People have thought about tapping that energy to power perpetual motion machines.”

“That’d be cool — the ultimate renewable.”

“Wouldn’t it, though? But no can do, for a couple of reasons. Virtual particles, by their nature, are random phenomena. You can’t depend upon what kind of particle might show up, or when, nor how long it might hang around. It’s not like NFC where antennas generate the particles. The other issue is that ‘minimum’ means minimum. If you could pull energy out of that space you’d lower its energy content and drop it below the minimum…. What’s the grin about?”

“Just wondering how they’d score hitting a virtual ball that disappears before the fielder catches it.”

~~ Rich Olcott

It all started with Newton’s mechanics, his study of how objects affect the motion of other objects. His vocabulary list included words like force, momentum, velocity, acceleration, mass, …, all concepts that seem familiar to us but which Newton either originated or fundamentally re-defined. As time went on, other thinkers added more terms like power, energy and action.


There is another way to get the same dimension expression but things aren’t as nice there as they look at first glance. Action is given by the amount of energy expended in a given time interval, times the length of that interval. If you take the product of energy and time the dimensions work out as (ML²/T²)×T = ML²/T, just like Heisenberg’s Area.
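Spelled out line by line, the dimension bookkeeping looks like this. It is only a sketch: M, L and T stand for mass, length and time as in the text, and the square brackets meaning “dimensions of” are my own shorthand.

```latex
\begin{align*}
[\text{energy}] &= \frac{M L^{2}}{T^{2}} \\
[\text{action}] &= [\text{energy}] \times [\text{time}]
                 = \frac{M L^{2}}{T^{2}} \cdot T = \frac{M L^{2}}{T} \\
[\text{position} \times \text{momentum}] &= L \cdot \frac{M L}{T} = \frac{M L^{2}}{T}
\end{align*}
```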

For practice using Heisenberg’s Area, what can we say about the atom? (If you’re checking my math it’ll help to know that the Area, h/4π, can also be expressed as 0.5×10⁻³⁴ kg m²/s; the mass of one hydrogen atom is 1.7×10⁻²⁷ kg; and the speed of light is 3×10⁸ m/s.) On average the atom’s position is at the cube’s center. Its position range is one meter wide. Whatever the atom’s average momentum might be, our measurements would be somewhere within a momentum range of (h/4π kg m²/s) / (1 m) = 0.5×10⁻³⁴ kg m/s. A moving particle’s momentum is its mass times its velocity, so the velocity range is (0.5×10⁻³⁴ kg m/s) / (1.7×10⁻²⁷ kg) = 0.3×10⁻⁷ m/s.
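For anyone who does want to check the math, here is a minimal Python sketch of the same arithmetic. The constant and function names are mine for illustration, not part of any library, and Planck’s constant is rounded to four figures, so the results should only be expected to match the text’s one-digit values.

```python
import math

# Assumed, rounded values; the names are illustrative only.
PLANCK_H = 6.626e-34                        # Planck's constant, kg m^2/s
HEISENBERG_AREA = PLANCK_H / (4 * math.pi)  # h/4π, roughly 0.5e-34 kg m^2/s
HYDROGEN_MASS_KG = 1.7e-27                  # mass of one hydrogen atom


def momentum_range(position_range_m):
    """Momentum range (kg m/s) that goes with a given position range (m)."""
    return HEISENBERG_AREA / position_range_m


def velocity_range(position_range_m, mass_kg):
    """Velocity range (m/s): the momentum range divided by the particle's mass."""
    return momentum_range(position_range_m) / mass_kg


if __name__ == "__main__":
    # The one-meter cube from the text.
    p = momentum_range(1.0)                    # roughly 0.5e-34 kg m/s
    v = velocity_range(1.0, HYDROGEN_MASS_KG)  # roughly 0.3e-7 m/s
    print(f"momentum range: {p:.1e} kg m/s")
    print(f"velocity range: {v:.1e} m/s")
```

Shrinking the position range in the call, say from one meter to a nanometer, raises both ranges by the same factor, which is the trade-off the Area expresses.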

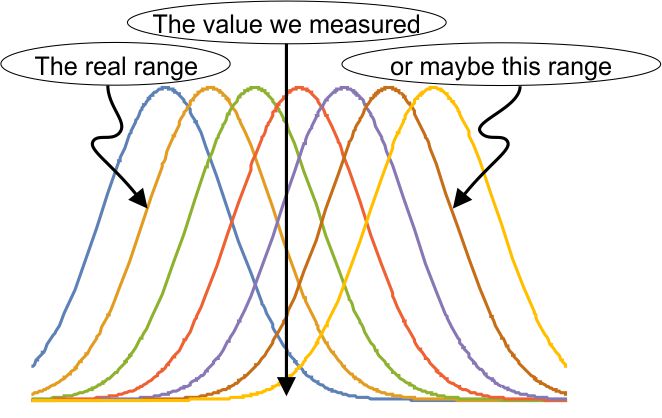


elaborate mathematical structure. If the measurement is a quantum mechanical result, part of that structure is our familiar bell-shaped curve. It’s an explicit recognition that way down in the world of the very small, we can’t know what’s really going on. Most calculations have to be statistical, predicting an average and an expected range about that average. That prediction may or may not pan out, depending on what the experimentalists find.

So there could be a collection of bell-curves gathered about the experimental result. Remember those