Susan Kim takes a sip of her mocha latte, eyes me over the rim. “That’s quite a set of patterns you’ve gathered together, Sy, but you’ve left out a few important ones.”

“Patterns?”

“Regularities we’ve discovered in Nature. You’ve written about linear and exponential growth, the Logistic Curve that describes density‑limited growth, sine waves that wobble up and down, maybe a couple of others down‑stack, but Chemistry has a couple I haven’t seen featured in your blog.”

“Such as?”

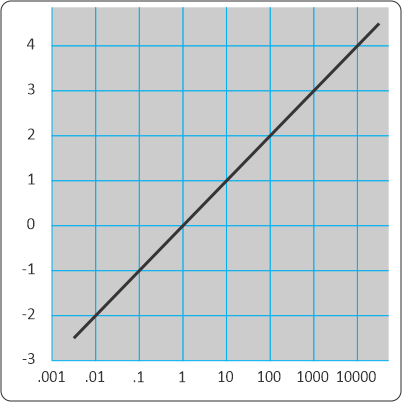

“Log-linear relationships are a biggie. We techies use them a lot to handle phenomena with a wide range. Rather than write 1,000,000,000 or 10⁹, we sometimes just write 9, the base‑10 logarithm. The pH scale for acid concentration is my favorite example. It goes from one mole per liter down to ten micro‑nanomoles per liter. That’s 10⁰ to 10⁻¹⁴. We just drop the minus sign and use numbers between 0 and 14. Fifteen powers of ten. Does Physics have any measurements that cover a range like that?”
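For readers who like to check with a keyboard, here is a minimal Python sketch of Susan's "take the log and drop the minus sign" recipe. The function name `ph_from_concentration` is made up for illustration, and the sketch treats concentration as if it were activity, a distinction this conversation sets aside.

```python
import math

def ph_from_concentration(molar):
    """Return pH: the base-10 log of the acid concentration (mol/L), sign dropped.

    Hypothetical helper for illustration; ignores concentration vs. activity.
    """
    # Subtracting from 0.0 avoids a cosmetic "-0" result at exactly 1 mol/L.
    return 0.0 - math.log10(molar)

# The two ends of the scale plus the neutral point.
for conc in (1.0, 1e-7, 1e-14):
    print(f"{conc:g} mol/L -> pH {ph_from_concentration(conc):.1f}")
```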

“A handful, maybe, in theory. The limitation is in confirming the theory across a billion-fold range or wider. Atomic clocks that are good down to the nanosecond are our standards for precision, but they aren’t set up to count years. Mmmm … the Stefan‑Boltzmann Law that links an object’s electromagnetic radiation curve to its temperature — our measurements cover maybe six or seven powers of ten and that’s considered pretty good.”

“Pikers.” <but I like the way she grins when she says it>

“I took those Chemistry labs long ago. All I remember was acids were colorless and bases were pink. Or maybe the other way around.”

“You’ve got it right for the classic phenolphthalein indicator, but there are dozens of other indicators that have different colors at different acidities. I’ll tell you a secret — phenolphthalein doesn’t kick over right at pH 7, the neutral point. It doesn’t turn pink until the solution’s about ten times less acidic, near pH 8.”

“So all my titrations were off by a factor of ten?”

“Oh, no, that’s not how it works. I’m going to use round numbers here, and I’ll skip a couple of things like the distinction between concentration and activity. Student lab exercises generally use acid and base concentrations on the order of one molar. For most organic acids, that’d give a starting pH near 1 or 2, way over on the sour side. In your titration you’d add base, drop by drop, until the indicator flips color. At that point you conclude the amounts of acid and base are equivalent, not by weight but by moles. If you know the base concentration you can calculate the acid.”

“That’s about what I recall, right.”

“Now consider that last drop. One drop is about 50 microliters. With a one‑millimolar base solution, that drop holds 50 nanomoles. OK?”

<I scribble on a paper napkin> “Mm-hm, that looks right.”

“Suppose there’s about 50 milliliters of solution in the flask. Because we’re considering the last drop, the solution in the flask must have become nearly neutral, say pH 6. That means the un‑neutralized acid concentration was 10⁻⁶ moles per liter, or one micromolar. Fifty milliliters at one micromolar concentration is, guess what, 50 nanomoles. Your final drop neutralizes the last of the acid sample.”
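The flask arithmetic is easy to replay. This is a rough sketch using Susan's round numbers (pH 6, 50 milliliters); the helper name `leftover_acid_nanomoles` is an assumption made for this example.

```python
def leftover_acid_nanomoles(ph, volume_ml):
    """Un-neutralized acid left in the flask, in nanomoles.

    Hypothetical helper: concentration is 10**(-pH) mol/L, times volume in liters.
    """
    acid_molar = 10.0 ** (-ph)        # pH 6 -> 1e-6 mol/L, one micromolar
    volume_l = volume_ml * 1e-3       # milliliters -> liters
    return acid_molar * volume_l * 1e9  # moles -> nanomoles

print(f"{leftover_acid_nanomoles(6, 50):.0f} nanomoles of acid left")
```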

“So the acid concentration goes to zero?”

“Water’s not that cooperative. Water molecules themselves act like acids and bases. An H₂O molecule can snag a hydrogen from another H₂O giving an H₃O⁺ and an OH⁻. Doesn’t happen often, but with 55½ moles of water per liter and 6×10²³ molecules per mole there’s always a few of those guys hanging around. Neutral water runs 10⁻⁷ moles per liter of each, which is why neutral pH is 7. Better yet, the product of H₃O⁺ and OH⁻ concentrations is always 10⁻¹⁴ so if you find one you can calculate the other. Take our titration for example. One additional drop adds 50 nanomoles more base. In 50 milliliters of solution that’s roughly 10⁻⁶+10⁻⁷ molar OH⁻. Call it 1.1×10⁻⁶, which implies 0.9×10⁻⁸ molar H₃O⁺. Log of that and drop the minus sign, you’re a bit beyond pH 8 which sends phenolphthalein into the pink side. Your titration’s good.”
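Susan's last calculation can be sketched the same way. The code below follows her round-number shortcut of simply adding neutral water's own 10⁻⁷ molar OH⁻ to the base from the extra drop, which is an approximation rather than a full equilibrium solution. The name `ph_after_extra_base` and its parameters are illustrative assumptions.

```python
import math

KW = 1e-14             # product of H3O+ and OH- concentrations, per the text
NEUTRAL_OH = 1e-7      # OH- in neutral water, mol/L

def ph_after_extra_base(extra_base_nmol, volume_ml):
    """Approximate pH after adding excess base to a just-neutralized flask.

    Hypothetical helper using the round-number shortcut: added OH- plus
    neutral water's OH-, rather than solving the equilibrium exactly.
    """
    added_oh = (extra_base_nmol * 1e-9) / (volume_ml * 1e-3)  # mol/L
    total_oh = added_oh + NEUTRAL_OH                          # about 1.1e-6
    h3o = KW / total_oh                                       # about 0.9e-8
    return 0.0 - math.log10(h3o)

print(f"pH after one more drop: {ph_after_extra_base(50, 50):.2f}")
```

Anything above about 8 lands on phenolphthalein's pink side, which is all the titration needs.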

I eye her over my mug of black mud. “A gratifying indication.”

~~ Rich Olcott